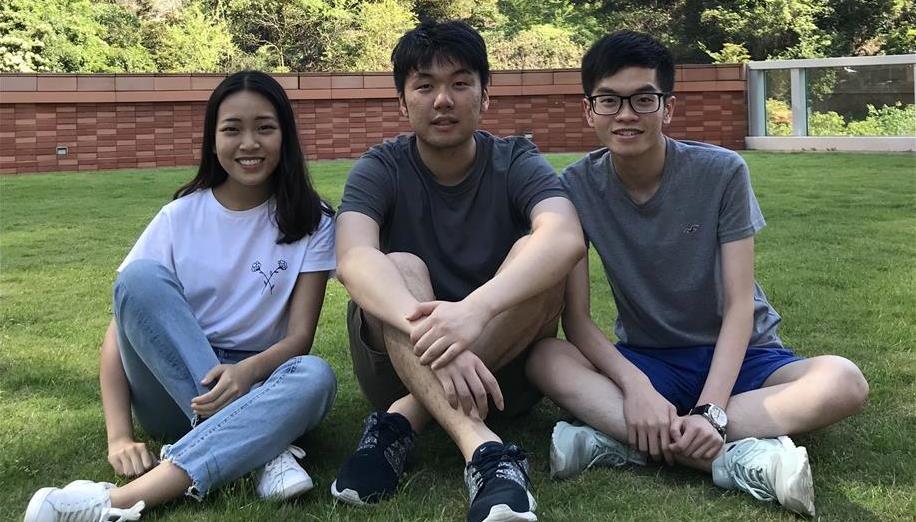

2018 World Finalists

2018 Asia Pacific Regional Finals

Malaysia

Did you know that pineapples are the third-most important and second-most exported tropical fruit in Malaysia? Before shipping the fruit, exporters have to determine pineapple quality with a refractometer, which means that a number of fruits are subjected to destructive analysis, leading to waste. Our team developed an IoT sensing device that integrates with machine learning on Azure cloud computing to predict the sweetness of a pineapple. With this device, we hope to help the pineapple industry in Malaysia improve efficiency and reduce waste. We also hope our device will let farmers evaluate the optimal ripeness of pineapples in a non-intrusive manner, before they are harvested.
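The description does not say which sensors or which model the device uses. As a minimal sketch, assuming a single hypothetical sensor reading per fruit and a small calibration set whose sweetness (°Brix) was measured once with a refractometer, a least-squares line can map new readings to a predicted sweetness without cutting the fruit:

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = a + b*x; returns (a, b)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = y_bar - b * x_bar
    return a, b

def predict_brix(model, reading):
    """Predict sweetness (°Brix) from one non-destructive sensor reading."""
    a, b = model
    return a + b * reading

# Hypothetical calibration data: sensor reading -> refractometer °Brix.
readings = [0.20, 0.35, 0.50, 0.65, 0.80]
brix = [10.0, 11.5, 13.0, 14.5, 16.0]
model = fit_line(readings, brix)
```

In practice a model trained on several sensor channels (the team used machine learning on Azure) would replace this one-variable fit; the sketch only shows how destructive testing could be limited to the calibration fruit.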

2018 United Kingdom National Finals

United Kingdom

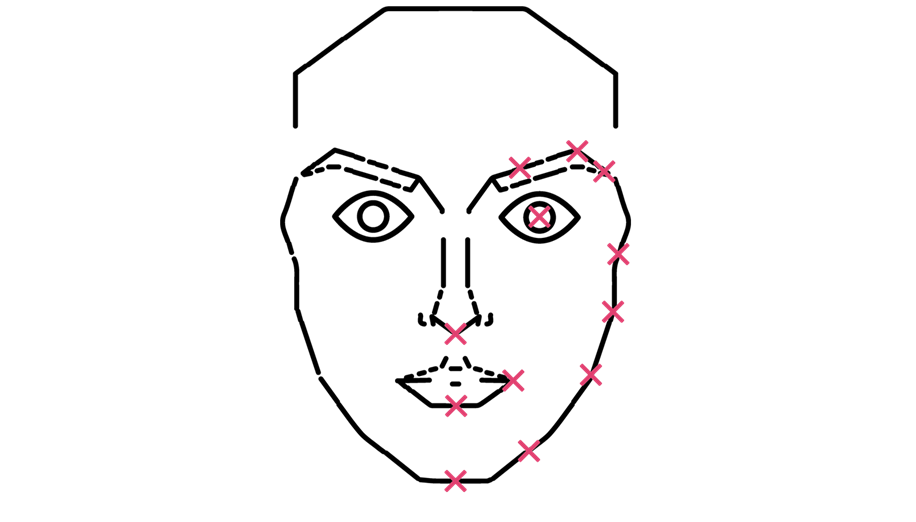

InterviewBot is a web-based application designed to help students with video or in-person interviews when applying for jobs. As second-year students seeking internship placements, we could not find a tool that would help us prepare for interviews, so InterviewBot gives real-time feedback on interview-style questions.

The motivation behind this project stemmed from our own experiences. Pranay had recently had his first telephone interview with a firm for a summer internship. Afterwards, he realised that he had forgotten to mention a few key points! Furthermore, as it was his first interview of this kind, he was unsure how he had performed. With Sam and Roneel also looking into summer internships and opportunities, we believed that InterviewBot would not only benefit us personally but would have a similar impact on other students in our position, as we were not aware of any system that offered the same services.

InterviewBot is designed as a practice interview session. The computer asks interview-style questions and, based on your facial expressions and responses (captured through the webcam and microphone), reports on several aspects of your performance, including levels of emotion and camera feedback. InterviewBot was built on three Cognitive Services:

1) Emotion API: reports levels of positivity and negativity in the user's response by capturing a screenshot of the user's face through the webcam and analysing it.
2) Bing Speech API: converts speech to text, so the user receives a written transcript of the interview once the session is finished. This is extremely useful, as users can look back at where they may have lacked confidence, or use it to remember the questions that were asked.
3) Text Analytics API: gives feedback on the sentiment of the user's speech and reports it back to the user in real time.

Although the Microsoft challenge had stated that we should aim to use one of the APIs in the Vision Cognitive Services package, we decided to broaden our knowledge and push ourselves outside our comfort zone. As second-year students, we had not been exposed to APIs of this complexity; however, after researching and thoroughly working through the API documentation, we successfully implemented not one but three APIs, all running in parallel!

InterviewBot is structured so that each user has their own account. This is accessed through a login page when visiting the website, built with an HTML and CSS template and our MySQL server, using PHP for parsing and sessions. Another key feature we implemented was a "remember" list, which lets the user write down the points they wish to mention during their interview. These points are automatically ticked off the list when they are mentioned. We also gathered some common interview questions and had the application loop through them in random order, reading them aloud through the user's audio output using the HTML5 SpeechSynthesisUtterance API.

After speaking with various people throughout the hackathon and condensing their ideas, we believe the potential for expanding this concept is almost limitless. Not only can it be a tool that gives students real-time feedback on their performance, but companies can also use it to assess their candidates' performance, with the written transcript allowing employers to dissect the interview in detail. A YouTube video showing the application in action can be found at this link: https://www.youtube.com/watch?v=xAHucFbN_c8

Not only did we win an Xbox One X (each!), but we also had the privilege of writing an article on Microsoft's Developers Blog, which can be found here: https://blogs.msdn.microsoft.com/uk_faculty_connection/2018/01/04/oxfordhack-winners-of-the-microsoft-cognitive-challenge/
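The write-up does not say how the "remember" list detects that a point was mentioned. A minimal sketch, assuming the Bing Speech transcript is available as plain text and that a point counts as mentioned when its exact words appear in order (a deliberate simplification; `tick_off` and `question_order` are hypothetical names), together with the random question order, could look like this:

```python
import random
import re

def normalise(text):
    """Lower-case the text and reduce it to space-separated alphanumeric words."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))

def tick_off(remember_list, transcript):
    """Return {point: mentioned?} using whole-word phrase matching on the transcript."""
    spoken = f" {normalise(transcript)} "  # pad so matches respect word boundaries
    return {point: f" {normalise(point)} " in spoken for point in remember_list}

def question_order(questions, seed=None):
    """Return a shuffled copy of the questions, so each is asked once per session."""
    order = list(questions)
    random.Random(seed).shuffle(order)
    return order
```

Exact phrase matching misses paraphrases ("led the team" would not tick off "team leader"); a real implementation would likely need fuzzier matching.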

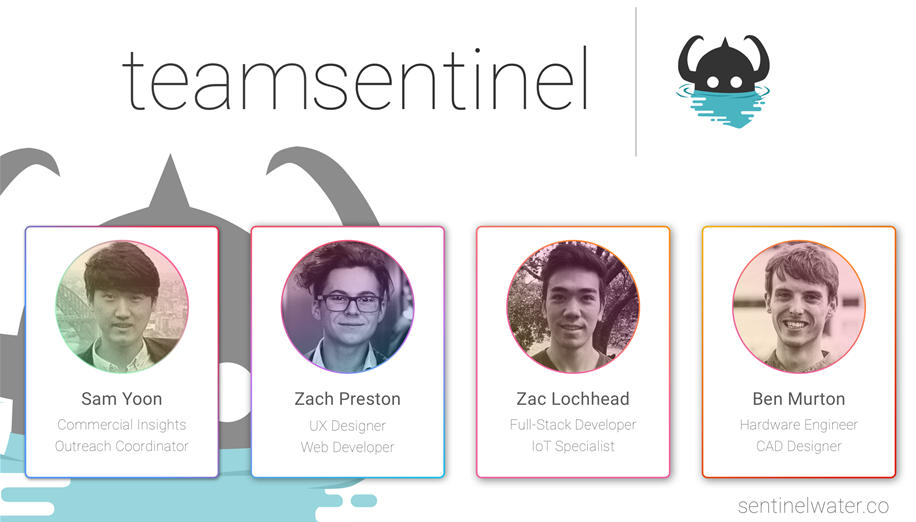

2018 ANZ Imagine Cup Regional Finals

New Zealand

Sentinel is an intelligent IoT tool that reimagines the way we manage water supplies. With a complete toolkit covering everything from autonomous supply management to sustainability analytics and cost insights, Sentinel strives to solve the real problems faced by water users, from residential to agricultural!

2018 United States National Finals

United States

Pengram is an AR/VR platform that allows engineers from around the world to be holographically ‘teleported’ into a workspace when needed. For example, if an operator repairing a $100,000 medical device needs help, they will be able to connect to our service and, using a HoloLens, watch an expert on that device perform the repairs in 3D.

2018 Poland National Finals

Poland

What’s the problem? The main problem we are trying to solve is the lack of any way to help divers immediately when they are underwater. A diver can easily become separated from their partner for many reasons: they can get hooked on an object and be unable to move, or become absorbed in the surrounding environment and lose their sense of direction in murky water. Such situations can have tragic consequences, including the diver's death. Normally, when a group of divers loses one of its members, they should search for the missing diver for about a minute and then resurface to discuss their next moves. These procedures, however, are not enough and mostly end in failure because they take too much time. Fortunately, we have come up with the perfect solution.

What’s our solution? Our product is a Diving Localizer, a small device that can be attached to a diver. Its most basic feature is the ability to locate the diver's position in three dimensions and send it, via our mobile app, to the person supervising from the boat or shore. This way, the diver can easily be found by other members of the group. In an emergency, the diver can send an SOS signal by pressing a button on the localizer, alerting the supervisor when they lose contact with the group, have a problem with their oxygen, or face any other dangerous situation. In addition to these safety functions, our device offers a series of extra features that can take the diving experience to a whole new level. One of them is the Diving Logbook, which keeps a record of the diver's underwater adventures, such as the route taken, the depth reached and the water temperature. This makes it possible to record and share diving experiences with friends and family. Moreover, diving centres can use the Diving Localizer to record the most popular routes and places and advertise them to their customers, which can help improve their business.
It can also be useful for diving instructors training new divers, making the learning experience much safer. It is also a good addition to their offer that can make them more competitive and attract more clients in the future. Finally, our device frees the person coordinating a trip from having to dive with the rest of the group: they can track the divers on their phone screen better than by watching them underwater.
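The entry does not describe how the localizer computes positions. Assuming it yields time-stamped 3D positions relative to the entry point plus a water-temperature reading (the `Sample` fields and `DiveLog` class below are hypothetical), a Diving Logbook could summarise a dive like this:

```python
import math
from dataclasses import dataclass, field

@dataclass
class Sample:
    t_s: float      # seconds since the dive started (hypothetical field)
    x_m: float      # east offset from the entry point, metres
    y_m: float      # north offset from the entry point, metres
    depth_m: float  # depth below the surface, metres (positive downwards)
    temp_c: float   # water temperature, °C

@dataclass
class DiveLog:
    samples: list = field(default_factory=list)

    def add(self, sample):
        self.samples.append(sample)

    def max_depth(self):
        return max(s.depth_m for s in self.samples)

    def route_length(self):
        """3D distance travelled, summing straight segments between consecutive samples."""
        total = 0.0
        for a, b in zip(self.samples, self.samples[1:]):
            total += math.dist((a.x_m, a.y_m, a.depth_m), (b.x_m, b.y_m, b.depth_m))
        return total

    def min_temperature(self):
        return min(s.temp_c for s in self.samples)
```

Storing raw samples rather than only summaries keeps the full route available for sharing, or for a diving centre aggregating popular routes.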

2018 Central Europe Regional Final

Germany

Our project Soul Sailor supports refugees dealing with mental illnesses such as PTSD or depression by providing psychological care and eliminating factors in their surroundings that contribute to their issues. To do so, we provide our users with Mayu, an AI- and data-driven digital companion who interacts with the user via speech, helps them express their experiences and lets them come to Mayu with their worries. Furthermore, through our novel approach of an event-based network, we reconnect relatives who were separated while fleeing. But how did we get the idea? Tushaar was travelling on the train from Munich to Mannheim and started talking with his seat neighbour, who turned out to be a refugee on his way to his brother. He had fled from Aleppo and told Tushaar how he had travelled 3,000 km alone as a 15-year-old. He had lost his sister during the journey and had passed through 10 countries. His story was truly heartbreaking. Just before getting off in Stuttgart, the refugee thanked Tushaar, because this was the first time he had been able to talk to somebody about everything he had been through. This inspired us to come together and give refugees the opportunity to deal with their experiences and the resulting psychological trauma. This video elaborates on this: https://youtu.be/AngGxwR54-c
Our website: https://soulsailor.tech

Germany

NASC is a web app that lets you search for news articles on the web, attempts to evaluate the sentiment of those articles and visualizes the results. Our goal is to encourage people interested in politics and other important topics and events to go beyond the first search result and look at multiple articles that express different views on the same topic. To achieve this, NASC offers three result views:

(1) Map: The Map view helps users understand geographical differences in attitudes towards a given issue. Articles are shown as colored markers on an embedded Bing Maps Control, indicating the location and sentiment of each article.
(2) Timeline: The Timeline view visualizes how sentiment towards an issue develops over time. Articles are displayed as colored points in a 2D scatterplot with time on one axis and sentiment on the other.
(3) List: The List view provides a familiar interface similar to regular search engines and shows more details at first glance than the other, more specialized views.

How it works: NASC is an ASP.NET web application with a C# backend, hosted on Microsoft Azure. It uses the webhose.io news search to find relevant articles and Microsoft Text Analytics to evaluate their sentiment.
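Both the Map and Timeline views encode sentiment as color. Assuming a score between 0 (negative) and 1 (positive), which is how version 2 of the Text Analytics sentiment endpoint reported scores, a simple red-yellow-green ramp might look like the following; the function and the ramp are our illustration, not NASC's code:

```python
def sentiment_color(score):
    """Map a sentiment score in [0, 1] to a red -> yellow -> green hex color."""
    score = min(max(score, 0.0), 1.0)  # clamp out-of-range inputs
    if score < 0.5:
        red, green = 255, round(510 * score)        # red fading to yellow
    else:
        red, green = round(510 * (1 - score)), 255  # yellow fading to green
    return f"#{red:02x}{green:02x}00"
```

Each article could then be drawn at its location (Map view) or at its publication time (Timeline view) in this color.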

Germany

Pavo Vision makes digital content accessible to visually impaired users by using advanced AI, cloud computing and the power of the community. The Pavo Vision system analyzes visual content in websites, documents and other digital assets and equips it with a description for visually impaired users. Mistakes in the analysis can be reported by the Pavo community to train the system: our models get smarter over time through this crowdsourcing approach and generate more accurate descriptions. The Pavo Vision system is currently available as a browser plugin, and we are extending Pavo with a Slack plugin right now. Our backend is designed to support a wide variety of new applications and services (e.g. an app for use on mobile devices).
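A browser plugin like this presumably first has to find visual content that lacks a text alternative. As a sketch using only Python's standard `html.parser`, images with a missing or empty `alt` attribute can be collected before being sent to a captioning service (not shown; its interface would be an assumption, and `images_needing_descriptions` is a hypothetical name):

```python
from html.parser import HTMLParser

class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> whose alt attribute is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing = []

    # Also called for self-closing <img ... /> tags via the default handle_startendtag.
    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        if not (attributes.get("alt") or "").strip():  # alt absent, valueless or blank
            self.missing.append(attributes.get("src"))

def images_needing_descriptions(html):
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```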

Germany

StudySmarter is an intelligent learning platform that empowers every student to achieve their educational goals and graduate from university. Our platform digitizes the entire learning process, making it more efficient, structured and engaging. Machine learning algorithms accompany students through the entire learning experience, automating or creating learning materials such as summaries, mind maps or flashcards with just a few clicks. In addition, students are automatically connected with fellow students studying the same subjects and receive individual supplementary content recommendations based on their preferences. StudySmarter not only saves time in learning but also boosts motivation, for example through extensive statistics that give students valuable feedback on their learning process. Furthermore, gamification features guarantee moments of success during exam preparation. We developed our web app with Angular 5 and host it on Microsoft Azure. Our Django backend uses a PostgreSQL database, also hosted on Microsoft Azure. To automatically recommend supporting resources, such as online tutorials, based on the documents students upload, StudySmarter makes use of the Bing Video Search API. In the long term, we are convinced that our product can support not only students across the world but also corporate learning and secondary education, turning our vision of empowering everyone to achieve their educational goals into reality.
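The entry says StudySmarter uses the Bing Video Search API to recommend tutorials from uploaded documents, but not how the search query is built. The sketch below assumes a naive keyword-frequency query (`top_keywords`, `build_video_request` and the stopword list are hypothetical) and only builds the request without sending it. The URL, the `q`/`count` parameters and the `Ocp-Apim-Subscription-Key` header follow the v7 API as documented around 2018; Bing Search endpoints have changed since, so treat them as assumptions to check:

```python
import re
from collections import Counter
from urllib.parse import urlencode

# Bing Video Search v7 endpoint as documented around 2018 (assumption: verify current docs).
ENDPOINT = "https://api.cognitive.microsoft.com/bing/v7.0/videos/search"
STOPWORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "for", "on", "with", "are", "by"}

def top_keywords(text, k=3):
    """Most frequent non-stopword words; ties keep their first-seen order."""
    words = re.findall(r"[a-z]+", text.lower())
    counts = Counter(w for w in words if w not in STOPWORDS and len(w) > 2)
    return [word for word, _ in counts.most_common(k)]

def build_video_request(text, api_key, count=5):
    """Return (url, headers) for a video search on the document's main keywords."""
    query = " ".join(top_keywords(text)) + " tutorial"
    url = f"{ENDPOINT}?{urlencode({'q': query, 'count': count})}"
    headers = {"Ocp-Apim-Subscription-Key": api_key}
    return url, headers
```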

2018 Russia National Finals

Russia

Spectrometry has been used by physicists to study the properties of matter for more than a hundred years. It is used in a wide range of scientific research and for quality control in production. In ecology, spectrometry is used to detect heavy metals in soil and water bodies. In metallurgy and the chemical industry, it allows the quality of raw materials to be controlled. In the jewelry industry, spectrometry is needed to measure the concentration of precious metals. It is also well known that spectral measurements can be used to date archaeological finds. We propose to use this amazing tool in business for labeling tradable goods. The method can be used in a variety of ways, from counterfeit inspection, where goods are compared against a reference standard, to quality control of large supplies. We can also keep track of exclusive items such as expensive wines. So far, we have conducted research on wine and coffee and are convinced of the method's high efficiency. With our project, we reached the world finals of the Microsoft Imagine Cup, which will be held in Seattle at the end of July. By then, we plan to assemble a compact device for measuring spectra and go beyond laboratory measurements. Next, we need to build a much larger database of substance spectra and try new types of products. We could potentially examine drugs as well, although this is a much more responsible task.
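The entry does not say how a measured spectrum is compared with the standard. One common, simple choice, assumed here, is the cosine similarity of two intensity vectors sampled on the same wavelength grid; the threshold of 0.99 is a hypothetical value that would need tuning per product:

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two intensity vectors on the same wavelength grid."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def matches_reference(sample, reference, threshold=0.99):
    """True if the sample spectrum has (nearly) the same shape as the reference."""
    if len(sample) != len(reference):
        raise ValueError("spectra must be sampled on the same wavelength grid")
    return cosine_similarity(sample, reference) >= threshold
```

Cosine similarity ignores overall intensity, so a sample measured with a stronger signal still matches its reference, while a different spectral shape does not.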

2018 Japan National Finals

Japan

According to the WHO, around 466 million people worldwide suffer from hearing disabilities, of which 34 million are children. A common misconception is that all people with hearing impairments hardly hear anything, but many actually hear a confusing cacophony of noise, making it difficult to focus on specific sounds or voices. This can make everyday outings to noisy environments such as restaurants or cafes exceedingly difficult. Mediated Ear provides an elegant solution to this challenge. Using deep learning, Mediated Ear can isolate any voice from a mixed audio source containing various noises and sounds, including multiple speakers. With Mediated Ear, anyone with a hearing impairment can easily tune into the voice they want to hear, and filter out distracting ambient noise. While existing solutions only work on highly specialized and expensive equipment, Mediated Ear works on any smartphone. Once Mediated Ear has learned a person's voice, users can use the companion smartphone app to effortlessly select, isolate and wirelessly transmit it to their earphones. To accomplish this, Mediated Ear only needs to listen to the target person speaking for 1 minute. The recorded audio is then quickly processed in the cloud, where a neural network learns to extract the target’s voice. Once training is complete, the neural network model is sent back to the smartphone app, enabling instantaneous on-device voice isolation. Mediated Ear could also be incorporated into standalone hearing-aid devices or earphones. Besides aiding those with hearing impairments, this could benefit ASD (Autism Spectrum Disorder) patients, who often suffer from oversensitivity to ambient noise. Mediated Ear could also improve safety in hazardous environments such as factories, construction sites, and airports, by allowing workers to filter out distracting noises. As such, Mediated Ear has the potential to empower not only those with hearing impairments, but also to truly "Empower us all".
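The entry does not name the network architecture. A common formulation for this kind of voice isolation, assumed here, is time-frequency masking: during training, the target is a ratio mask computed from the clean voice and the noise, and at run time the network's predicted mask scales the magnitude spectrogram of the mixture. The sketch shows only the mask arithmetic on small magnitude grids (rows are frames, columns are frequency bins); the STFT and the network itself are omitted, and the function names are hypothetical:

```python
def ideal_ratio_mask(target_mag, noise_mag, eps=1e-12):
    """Per time-frequency bin: |S|^2 / (|S|^2 + |N|^2), a common training target."""
    return [[s * s / (s * s + n * n + eps) for s, n in zip(s_row, n_row)]
            for s_row, n_row in zip(target_mag, noise_mag)]

def apply_mask(mixture_mag, mask):
    """Scale each bin of the mixture's magnitude by the (predicted) mask value."""
    return [[m * g for m, g in zip(m_row, g_row)]
            for m_row, g_row in zip(mixture_mag, mask)]
```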

Japan

EFFECT is an artificial intelligence system that automatically feeds fish in sea-surface farming, such as red sea bream and Pseudocaranx dentex. It achieves optimal automatic feeding from the arrival of fry to shipment in three steps. First, it determines how to feed during the period from the arrival of fry to shipment. Second, it determines the optimal feeding time and feeding amount. Finally, it judges the activity of the fish and decides whether to continue or stop feeding. Each of these steps is realized with artificial intelligence using machine learning.
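The three steps split naturally into planning (steps 1 and 2, which fix the ration and timing) and a control loop (step 3). A minimal sketch of that loop, assuming a hypothetical activity score in [0, 1] produced by the team's machine learning model before each portion and a hypothetical stopping threshold of 0.3:

```python
def feeding_session(activity_scores, ration_g, portion_g, stop_below=0.3):
    """Dispense portions while fish stay active and the planned ration lasts.

    activity_scores: hypothetical model outputs in [0, 1], one observed before each portion.
    Returns the total amount fed, in grams.
    """
    fed_g = 0
    for score in activity_scores:
        if score < stop_below:
            break  # step 3: fish are no longer active, so stop feeding
        if fed_g + portion_g > ration_g:
            break  # step 2: the planned ration for this session is used up
        fed_g += portion_g
    return fed_g
```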

Japan

Emergensor is a web service that provides security information to people living in conflict-affected areas through a mobile application. Conflict is a critical issue facing our society today, with many innocent victims suffering from it. People living in conflict-affected regions live in constant fear of incidents such as bombings and gunfire and face ongoing risks to their safety and security. Security information is therefore essential to their lives. Despite this need, however, governments in conflict-affected regions mostly do not share emergency information immediately. As a result, people use social networking services (SNS) such as Facebook, Twitter or Instagram to share information about incidents. This is not a perfect solution, however, because information shared through SNS is usually delayed. That is why we need a way to share security information immediately, with and for everyone. Emergensor focuses on people's behavior to provide immediate information, because behavior changes noticeably in an emergency. Emergensor uses machine learning to link acceleration data to behaviors. Collecting data from smartphone accelerometers is a practical choice because it consumes less battery and raises fewer privacy issues than apps that constantly use GPS or the microphone. In addition, it does not send much data to the server, so it works on 3G networks. Furthermore, Emergensor analyzes people's collective behavioral data in emergencies. It continuously evaluates how unusual each user's current behavior is and creates behavioral maps of people when it detects unusual actions. It also has the potential to analyze these behavioral maps automatically through deep learning in order to share appropriate information with users.
There have been few accelerometer experiments in the Philippines, especially for people in conflict-affected areas, and almost no companies have tried to evaluate how unusual a user's current behavior is. Analyzing behavioral data collectively is also a very rare approach. MyShake (http://myshake.berkeley.edu/), a free app that detects earthquakes using the accelerometer, is the closest project; however, MyShake is purely for earthquakes. After conducting interviews with Filipinos who have been affected by conflict, we have already developed an Android application and the mobile backend (server-side application) on Azure. Emergensor official website: www.emergensor.com
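The entry does not describe how Emergensor scores unusual behavior. As a simple stand-in, not the team's machine learning or deep learning models, the sketch scores each user's current acceleration magnitude against that user's own history (a z-score) and flags an area when enough users in it are unusual at once; the thresholds, function names and per-area grouping are hypothetical:

```python
import math
from statistics import mean, pstdev

def magnitude(ax, ay, az):
    """Length of the 3-axis accelerometer vector."""
    return math.sqrt(ax * ax + ay * ay + az * az)

def unusualness(baseline, current):
    """z-score of the current magnitude against the user's own recent history."""
    mu, sigma = mean(baseline), pstdev(baseline)
    return 0.0 if sigma == 0 else abs(current - mu) / sigma  # no spread: no evidence

def cell_alert(flags, min_users=3, min_fraction=0.5):
    """Flag an area when enough users in it behave unusually at the same time."""
    return len(flags) >= min_users and sum(flags) / len(flags) >= min_fraction
```

Aggregating per area rather than per user is what makes the signal collective: one person running is ordinary, many people in one place suddenly running is not.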

2018 India National Finals

India

RealVol offers a virtual reality (VR) based immersive walkthrough inside a CT or MRI volume. It uses an advanced volumetric algorithm to convert 2D CT/MRI scan images directly into 3D volumes for interactive visualization. RealVol provides a complete walkthrough of the scan (currently with HTC Vive, with HoloLens planned for the future). Doctors and students can now also view these volumes on their Android and iOS phones, and in 3D VR using Google Cardboard and HTC Vive.
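RealVol's volumetric algorithm is not described. Any such pipeline starts by stacking the 2D slices into a 3D voxel grid; maximum intensity projection (MIP), shown below as a much simpler, standard rendering technique and not RealVol's method, then projects that grid back onto a 2D view. Function names are hypothetical:

```python
def stack_slices(slices):
    """Stack equally sized 2D slices (lists of rows) into a volume indexed [z][y][x]."""
    height, width = len(slices[0]), len(slices[0][0])
    if any(len(s) != height or len(s[0]) != width for s in slices):
        raise ValueError("all slices must have the same shape")
    return [[row[:] for row in s] for s in slices]  # copy so the input is not aliased

def max_intensity_projection(volume):
    """Project along z: each output pixel is the brightest voxel behind it."""
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    return [[max(volume[z][y][x] for z in range(depth)) for x in range(width)]
            for y in range(height)]
```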

2018 Taiwan National Finals

Taiwan

We are Biolegend, and we would like to show you our new product, BioKnee. BioKnee provides a brand-new approach to rehabilitation through a mobile application, a software system, a database for data storage, wearable devices and an AI computing platform (Azure). We aim to bridge the gap between rehabilitation practitioners and clinical specialists by linking professional clinical settings with the patient's actual rehabilitation records and feeding them into the system for AI analysis. BioKnee is also a rehabilitation communication system that gives clinical therapists and patients a well-connected platform and builds a personal database during treatment. It predicts recovery time through machine learning and also motivates patients to keep up their rehabilitation. With BioKnee, patients no longer suffer from the pain points caused by the gap in clinical expertise.

2018 China National Finals

China

Our team tackles the difficulty of quantitatively diagnosing Parkinson’s disease. Combining deep learning with signal processing, we use a convolutional neural network to extract features from the surface electromyography (sEMG) signals of Parkinson’s disease patients and then grade their condition with a nonlinear classifier. With this method, doctors can give patients the right therapy. Meanwhile, our diagnostic instrument is small and light, making it easy to use both in hospitals and at home. Its portability makes it a highly useful and practical invention.
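The network's architecture is not given. To illustrate what feature extraction by a convolutional layer means, the sketch runs one convolution + ReLU + max-pooling stage over a short signal; the kernel is hand-picked (a simple difference filter), whereas a trained network would learn many kernels from sEMG data, and the resulting features would then go to the nonlinear classifier:

```python
def conv1d(signal, kernel, bias=0.0):
    """'Valid' 1D convolution (cross-correlation, as in CNN layers) followed by ReLU."""
    k = len(kernel)
    out = []
    for i in range(len(signal) - k + 1):
        acc = bias + sum(signal[i + j] * kernel[j] for j in range(k))
        out.append(max(0.0, acc))  # ReLU keeps only positive responses
    return out

def max_pool(values, size):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(values[i:i + size]) for i in range(0, len(values) - size + 1, size)]
```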

China

Attention is a project made up of a smart headband, a toy car and an app that helps treat children with ADHD through play. Children use their brain waves to drive the toy car, training their ability to focus. The app provides early screening results, real-time detection and adjuvant therapy. It is not only a wonderful combination of brain-computer interfaces and deep learning but also a breakthrough in applying the concept of recreation therapy, achieving painless and convenient diagnosis and treatment.

2018 Hong Kong National Finals

Hong Kong SAR

Tale is a presentation coach that gives users feedback on a rehearsal of a presentation or speech they are preparing. Nowadays, delivering presentations is common and essential in daily life. From finding a job or presenting a business idea at work to giving a presentation for a school project, we face the challenge of delivering a good presentation every day. Our solution is a professional artificial intelligence that helps people evaluate the performance in their presentation videos anytime and anywhere. The AI considers different dimensions of a presentation and analyzes the video, then gives users instant feedback with analytics results and suggestions.

2018 Middle East and Africa Finals

Pakistan

Pakistan is ranked highest in childbirth fatalities. Intrauterine deaths and stillbirths are very frequent in rural areas of Pakistan, where proper medical facilities are lacking. There is an urgent need for a solution that captures the vital signs and fetal condition during pregnancy and communicates them to remote consultants who can take immediate action to prevent stillbirths. It is quite difficult for parents to travel from rural areas to the cities to see doctors or have an ultrasound several times a month to check on their unborn child, and they also have to wait for appointments. Complications may arise between appointments without the parents knowing, since the fetal condition is not monitored regularly. Fe Amaan is a wearable belt that regularly monitors the fetus's health through an IoT sensor device placed on the mother's abdomen. The device captures the fetal heart rate and movement and sends them via Bluetooth to the mobile application, where the data is analyzed and displayed so that doctors know about the health of the fetus. The main feature of the system is regular, automated analysis of fetal health without causing any harm to the mother or the child. If anomalies in heart rate or movement patterns occur, the system generates timely alerts so that precautionary measures can be taken before it is too late. Because the system makes remote monitoring of the fetus possible, it aims to reduce the high rate of intrauterine deaths and stillbirths in Pakistan. After mass production, it will be used by lady health workers to monitor the fetal condition of expectant mothers living in rural areas.
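The entry does not specify the alert rules. A minimal sketch, assuming an alert is raised when several consecutive fetal heart rate readings fall outside the commonly cited normal baseline range of 110-160 bpm (`min_consecutive` and the function name are hypothetical), might be:

```python
NORMAL_FHR_BPM = (110, 160)  # commonly cited normal baseline fetal heart rate range

def fhr_alerts(readings_bpm, min_consecutive=3, normal=NORMAL_FHR_BPM):
    """Return the start index of each run of at least `min_consecutive` out-of-range readings."""
    low, high = normal
    alerts, run_start, run_len = [], None, 0
    for i, bpm in enumerate(readings_bpm):
        if bpm < low or bpm > high:
            if run_len == 0:
                run_start = i
            run_len += 1
            if run_len == min_consecutive:  # alert once per run, when it becomes long enough
                alerts.append(run_start)
        else:
            run_len = 0
    return alerts
```

This is an illustration only: clinical fetal monitoring also considers variability, accelerations, decelerations and movement data, none of which is modelled here.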

Turkey

Proland is a machine learning solution that aims to solve inefficiency in agriculture and agriculture-related activities. As a team with many farmer relatives, we observed that many farmers decide what to plant without considering what is most appropriate for their land, both economically and environmentally. Usually, they select one of the most profitable crops of the previous year, or they plant the same crop for generations without realizing the effects of global warming on their soil and climate. Moreover, since almost everyone in small villages plants the same products out of economic concerns or habit, many crops are wasted or sold at incredibly low prices, while many products are transported from distant regions even though local farms are well suited to producing them. Our ML model is built on Azure Machine Learning Studio using historical temperature, precipitation and ton/acre yield values. We tested our current model on different regions of Turkey and predicted the quantity harvested for different crops with 92.5% accuracy. Our cross-platform mobile application (UWP, Android and iOS) is currently being developed with Xamarin.Forms. Visit our web page: www.prolandfarming.com

Egypt

The solution aims to make sea swimming much safer by eliminating the danger of drowning. It is a smart, integrated IoT solution that provides interactive, real-time monitoring of swimmers' state and location through a supervision dashboard in a lifeguard mobile app and smart wearable devices worn by swimmers; a quick, accurate drowning detection technique based on biometrics; and finally an immediate automated rescue procedure using a portable flotation device and an autonomous drone carrying a buoy. Moreover, it provides customers with a web portal for data management and a BI insights dashboard.

2018 LATAM Regional Final

Argentina

Lexa is a platform developed to save people’s lives and end medical prescription fraud. We combine blockchain technology with advanced machine learning to provide a reliable and secure solution.

Mexico

RedBeat is a monitoring system built around a pocket-sized IoT device that measures a patient's vital signs in real time, such as temperature, humidity, heart rate, blood oxygen and the heart's electrical activity. RedBeat collects the data and automatically sends it to a dashboard shared with your doctor, all powered by Azure Cloud. More than just a monitoring system, RedBeat is a smart control system that aims to help prevent heart attacks and strokes, thanks to its built-in machine learning.

Brazil

On our planet, 253 million people are visually impaired, of whom 38 million are completely blind, according to the World Health Organization (WHO). 81% of visual impairment could be avoided if diagnosed and treated early, and US$102 billion could be saved with appropriate eye care services and prevention. ADAM Robo is a 5-10 minute visual acuity screening software used to test for and prevent common vision problems. ADAM uses a guided bot conversation together with an individual anamnesis and vision tests that can identify possible refractive errors such as myopia (nearsightedness), hyperopia (farsightedness), presbyopia (age-related loss of near vision) and astigmatism, as well as Daltonism (color blindness) and cataract, the leading cause of avoidable blindness. We are aligned with United Nations (UN) Sustainable Development Goals 3 (Good Health & Well-Being) and 4 (Quality Education). We have impacted 550k people to date and forecast an impact on 3 million lives in 2018. That number could be much bigger if we establish the right partnerships.

2018 Canada National Finals

Canada

We created SmartARM, a functional robotic hand prosthesis. Using Microsoft Azure's Computer Vision, Machine Learning and Cloud Storage, we built a robotic hand that uses a camera embedded in the palm to recognize objects and calculate the most appropriate grip for each object. Thanks to machine learning, the more the model is used, the more it improves. Since all data is stored in the cloud, the trained model stays with you even when you switch devices.

2018 France National Finals

France

Our ambition is to make theater accessible to everyone, whether deaf or hearing-impaired people, foreigners or even locals who wish to have subtitles, with simple software available on smartglasses, smartphones or tablets that displays subtitles in the language chosen by the user, because at Thetrall, we believe theater is for all!

2018 Hungary National Finals

Hungary

We are developing a smartphone application that helps students understand the curriculum using their existing textbooks. In our experience as students, many children find it hard to imagine three-dimensional shapes when the illustration is two-dimensional on paper. This is easy with common shapes, but further into the curriculum we meet shapes such as the dodecahedron, which are harder to explain. Our solution is an application that uses the smartphone to project a 3D object onto the book's illustration, which we then see in AR on the phone's screen. We can walk around the object, and its spatial appearance helps us understand the basis of the calculations; last but not least, based on feedback, the kids like it and actually use it. We have optimised it for almost all primary school subjects, such as biology, history, geography and chemistry. We would like to create a shared platform built on Microsoft Azure cloud services. Through this service, users will be able to create their own lists and choose which object is shown on a chosen picture. Once chosen, the object can be accessed in the application with an identification code.

2018 Belarus National Finals

Belarus

The premise of this wonderful collaborative work is to help companies hire the right students using sophisticated data-driven algorithms based on psychology, face and gesture recognition, and company culture assessment. The algorithms can also help the service industry communicate better with customers, based on psychological type and real-time emotion detection.

2018 Greece, Cyprus and Malta Regional Final

Greece

iCry2Talk proposes a low-cost, non-invasive intelligent interface between infant and parent that translates the baby's cry in real time, associates it with a specific physiological and psychological state, and presents the result as a text, image and voice message. The hypothesis adopted by iCry2Talk is that efficiently combining and analyzing different sources of information through advanced signal processing techniques and deep learning algorithms can provide meaningful and reliable feedback to parents about the care of their baby.

2018 Central Eastern Europe Regional Final

Moldova

Our solution is based on neural networks. We provide translation from captured video, and we currently achieve up to 100% accuracy. We want to use our solution in multifunctional and trading centers, and also embed it into social networks and assistant robots. We are now negotiating with equipment providers, businesses and software developers.

2018 Romania National Finals

Romania

XVision is a system designed to automatically detect anomalies and diseases anywhere in the human body with radiologist-level accuracy, simply by analyzing common medical X-ray images with the help of the latest Azure technologies, such as Machine Learning and Azure Functions. Our product will provide a much-needed solution for people in areas of the world that lack access to radiology diagnostics, while also acting as an assistant tool for medical experts examining radiographs.